Genuine cooperation between artificial intelligence & humans

There are close to eight billion people on this planet who have an excellent intuitive understanding of human motion. By creating a series of video games to crowd-source users' knowledge of locomotion, we aim to develop a knowledge base of kinetic data that will be used to train the next generation of humanoid robots.

We aim to create a future in which humanoids and humans can collaborate meaningfully. By gamifying the robotic training process, we allow everyone to participate.

Human-centered AI Engine

We are heading towards a future in which humanoid robots will become an essential part of our day-to-day lives. We developed the Mollia AI Engine to make it easy for people to collaborate with robots.

Adaptive & Trainable

Highly adaptive and trainable software for the kinematic control of our virtual humanoid robot, called Babu.

Realistic Training Environment

The current virtual training environment is implemented in Bullet Physics, with its parameters kept within realistic settings.

Platform technology

The software will come with a toolkit for developers, creating the path to many novel kinematic AI-based applications.

Language-like learning system

A learning system that describes motion in terms of a unified system of geometric building blocks (words) and a set of high-level rules (grammar).

Skill transferability

We developed a more natural approach to robotic intelligence, one that yields highly transferable kinematic skills.

Generative learning model

The learning process records principles, giving Babu the ability to generate its own kinematic solutions to problems.

Let's build this exciting future together! Join the community

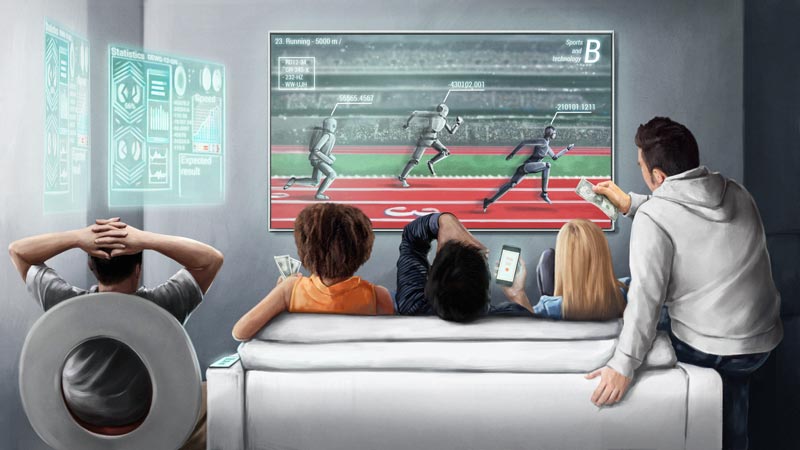

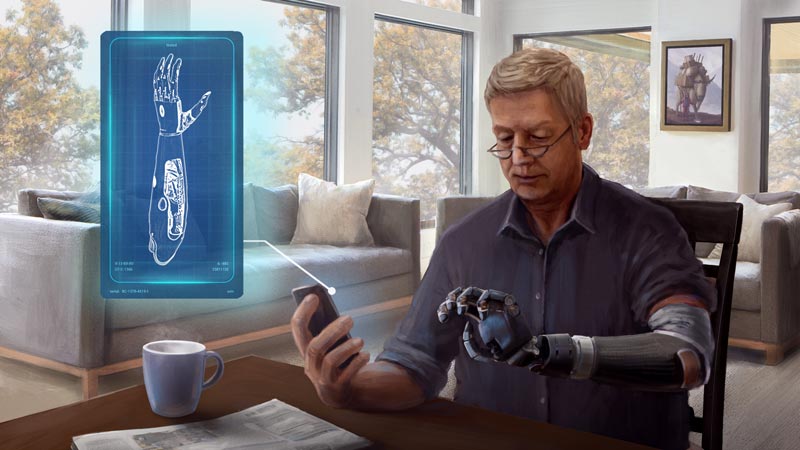

APPLICATIONS OF MOLLIA AI

Our technology has a range of use cases in such industries as entertainment (video games, e-sports, film), robotics (humanoid, industrial), healthcare (orthopedic, exoskeletons), space exploration, and defense.

Phase 1 - Video games

AI 3.0 MEETS WEB 3.0

Bringing a robot into everyone's home for training is not feasible. However, robots can be trained in a virtual environment through video games. Since this project involves community-based training, it is a natural fit with Web 3.0, blockchain, NFTs, and play-and-earn games. By gamifying the training experience, we can entertain people and enable them to earn money.

Request a demo

Phase 2 - Data Collection

AI & ROBOTICS - CROWD-SOURCED KINETIC DATASET

Adaptive and trainable robots have many industrial applications, such as when work must be carried out in hazardous environments. We can use the user-generated motion data from the video games to improve the kinematics of physical humanoid robots. Moreover, the best virtual robot trainers might find themselves landing a job at robotics companies to train their actual hardware.

Learn more

Phase 3 - Platform technology

BENEFITING OTHER INDUSTRIES THROUGH COLLABORATION

Eventually, we plan to provide access to the technology through an API that will be useful for other industries, such as healthcare and space exploration. A robotic prosthetic part, for example, could be trained to function similarly to the patient's own body. Exoskeletons could be trained to adapt to the unique motion of their users.

Let's collaborate

We strive to achieve a qualitative change in human-machine interaction. Check out how we intend to make it happen.

Download our Vision Deck

Leadership Team

Great things in business are never done by one person. They're done by a team of people. Mollia's founding team has over 100 years of combined experience in venture building, mathematics, psychology, software development, and finance.

Daniel Vincz

CEO

Daniel is a serial entrepreneur and computer engineer. Along with other businesses, he co-founded the INPUT Program, a program supporting Hungarian startups that was awarded the UN Global Best Practice award in 2018. He received the ‘Legend Award’ in Malaysia in 2019 and the ‘2050 Youth Award’ in China in 2020.

Ignac Siba

COO

Ignac is a serial entrepreneur, angel investor, and economist who used to be the regional CFO for CEEA at Citigroup. After his Citi career, he was managing director at the Economic Development Operational Programme, responsible for deploying $3.6 billion. He is also a board member of multiple companies.

Andras Joo

CHIEF ARCHITECT

Andras holds a PhD in Psychology. He is a serial entrepreneur who has successfully launched and sold multiple businesses. Previously, he was Head of the Laboratory at the Hungarian National Institute of Psychology. Andras developed the mathematical foundation of Mollia, with the initial idea dating back to 1998.

Daniel Joo

CTO

Daniel holds a PhD in Mathematics. He has been a researcher at the Alfréd Rényi Institute of Mathematics of the Hungarian Academy of Sciences since 2014 and has published numerous articles in international publications. He is the lead developer of the core mathematical models for Mollia AI.

WE ARE HIRING

Interested in robotics and video games and passionate about redefining what's possible? We want to hear from you!

Let's disrupt robotics together through video games and give power back to the people through Web 3.0.

Get in touch

Development Milestones

You can follow the development of our virtual robot, Babu, from the very beginning. It's been a long and rocky journey so far, with lots of dead ends and failures, but we did not give up and neither did Babu, so today, Babu is capable of learning from humans. And this is just the beginning.

1. Setting up the training environment

The first step was to design the virtual training environment for our algorithms in physics simulation software (Bullet Physics). We chose parameters (e.g., gravity or the motor impulses in the robot) to recreate realistic conditions. We constructed the initial basic kinematic kit for our simulated robot, Babu.
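As a rough illustration of what choosing realistic parameters can look like, the following Python sketch groups a few simulation settings and checks them against plausible ranges. The class name, field names, default values, and ranges are all hypothetical and chosen for illustration; they are not Mollia's actual settings, and no Bullet Physics calls are made.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SimConfig:
    """Hypothetical container for simulation parameters (illustrative only)."""

    gravity_m_s2: float = -9.81        # vertical gravity, Earth-like
    time_step_s: float = 1.0 / 240.0   # fixed physics step (assumed value)
    max_motor_impulse_ns: float = 5.0  # assumed per-step cap on joint motor impulse

    def validate(self) -> None:
        """Raise ValueError if a parameter leaves the assumed realistic range."""
        if not -10.0 <= self.gravity_m_s2 <= -9.5:
            raise ValueError(f"unrealistic gravity: {self.gravity_m_s2}")
        if not 0.0 < self.time_step_s <= 1.0 / 60.0:
            raise ValueError(f"time step too coarse: {self.time_step_s}")
        if self.max_motor_impulse_ns <= 0.0:
            raise ValueError("motor impulse cap must be positive")


config = SimConfig()
config.validate()  # passes with the defaults above
```

Keeping the checks in one place makes it harder for a training run to drift into settings that no physical robot could reproduce.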

2. The very first steps for our virtual robot, called "Babu"

We started with very simple models and moved towards more complex ones. We also built a developer interface for the supervised part of the learning process. We implemented the very first algorithms so that our robot could practice and improve its previously learned skills in an unsupervised fashion.

3. The development path of a human baby

To get Babu to his first steps, we closely followed the development path of a human baby learning to walk on two legs after becoming aware of its limits and learning to balance its core, muscles, and movements. Once the core skills were sufficiently developed, the unified and hierarchical system allowed Babu to build on them autonomously.

4. Automatic adaptation to altered body types

This process not only improves efficiency but also finds a large number of style variations that all retain bipedal balance. In the current stage of development, Babu is already able to adapt his skills to new circumstances such as a different physique, or special tasks like walking in high heels.

5. Manipulating heavy objects in the virtual space

Babu can manipulate objects of different weights. In this video, the luggage weighs around one-third of Babu's weight. Note that Babu does not need to stop and get into a 'lifting' position when picking up the luggage. The AI takes the added weight into account when establishing bipedal balance.
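To see why a load of this size matters for balance, consider a simplified one-dimensional model in which the robot and the luggage are treated as point masses. The sketch below computes their combined center of mass; the masses and the 0.4 m carrying offset are assumed values for illustration, not figures from the simulation.

```python
from typing import Sequence


def combined_center_of_mass(masses: Sequence[float], positions: Sequence[float]) -> float:
    """Return the mass-weighted average position of point masses along one axis."""
    if len(masses) != len(positions):
        raise ValueError("masses and positions must have the same length")
    total_mass = sum(masses)
    if total_mass <= 0.0:
        raise ValueError("total mass must be positive")
    return sum(m * x for m, x in zip(masses, positions)) / total_mass


robot_mass = 60.0              # kg, assumed
luggage_mass = robot_mass / 3  # one-third of the robot's weight, as in the text
robot_x = 0.0                  # robot's own center of mass (reference point)
luggage_x = 0.4                # m, assumed horizontal offset of the luggage

shift = combined_center_of_mass([robot_mass, luggage_mass], [robot_x, luggage_x])
print(f"combined center of mass shifts by about {shift:.2f} m")
```

With a load of one-third of the body weight, the combined center of mass moves a quarter of the way toward the load regardless of the actual masses, since (m/3) / (m + m/3) = 1/4. A balance controller has to compensate for a shift of that size.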

6. There is no learning without failure

We implemented several training rooms for Babu to practice his skills under variable circumstances. These setups often require him to react to random events that are unpredictable for the AI. These “training sessions” serve to improve the adaptivity of Babu’s kinematic kit. Of course, there is no learning without failing.

7. Creative kinematic intelligence training

The high degree of adaptivity reached during these sessions is critical to enabling Babu to interact with users. It means that Babu becomes able to follow real-time kinematic instructions coming from a VR or motion capture device while retaining the key elements of his own set of moves that are necessary to maintain his balance.

8. Building stability for real-time interaction

The following scenes demonstrate real-time interaction with Babu. The resulting motion always manifests as a combination of previously obtained skills and an intent to adjust to user input. In this video, Babu has already learned how to shift his weight from one foot to the other and execute a periodic base stance that behaves well under random perturbations. This stability is essential for achieving steady control during the interaction.
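The text does not specify how learned skills and user input are combined. As a deliberately simple stand-in, the sketch below uses a linear blend of joint angles, where a weight controls how far the pose moves from the learned stance toward the user's request. The function name, the weight parameter, and the joint-angle representation are assumptions made for illustration.

```python
from typing import List, Sequence


def blend_pose(skill_pose: Sequence[float],
               user_pose: Sequence[float],
               user_weight: float) -> List[float]:
    """Linearly blend a learned pose with a user-requested pose, joint by joint.

    user_weight is clamped to [0, 1]: 0 keeps the learned pose unchanged,
    1 follows the user input completely.
    """
    if len(skill_pose) != len(user_pose):
        raise ValueError("poses must have the same number of joints")
    w = min(max(user_weight, 0.0), 1.0)
    return [(1.0 - w) * s + w * u for s, u in zip(skill_pose, user_pose)]


# Two hypothetical joint angles in radians: learned stance vs. user request.
target = blend_pose([0.0, 1.0], [1.0, 1.0], user_weight=0.25)
```

A low weight keeps the robot close to a stance it already knows how to balance, which is one simple way to trade responsiveness against stability.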

9. Teaching Babu - the beginnings

The main thing that sets our design apart from conventional video game control is that the user cannot directly impose their will on what happens on the screen, since the motion must be consistent with both the laws of the simulated physics and Babu's existing kinematic kit. The teaching of Babu therefore begins with a learning process for the users themselves, whereby they have to explore how the system reacts to a variety of inputs.

10. The user and the AI create new moves together

Input at this stage is captured with its full dynamics, and Babu can sensitively react to small changes in velocity or position. When control is executed in good synchronization with Babu's motion, the user and the AI can form new moves in cooperation, leading back to another stable periodic state. Collaboration requires learning from both the human and the AI.

11. Turning external control into internal control

After reaching a suitable degree of stability, we can turn the external control of Babu into internal control. In other words, Babu records the moves shown to him into his kinematic kit and can reinforce them via unsupervised training. Symbolic commands can later invoke these moves without showing the exact dynamics again.
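The idea of storing a demonstrated move and later invoking it by name can be pictured with a small registry. The sketch below is only an analogy under stated assumptions: the `KinematicKit` class, its methods, and the representation of a move as a list of joint-angle frames are hypothetical, and the unsupervised reinforcement step is not modeled.

```python
from typing import Dict, List

Trajectory = List[List[float]]  # a move as a sequence of joint-angle frames (assumed)


class KinematicKit:
    """Hypothetical store mapping symbolic commands to recorded moves."""

    def __init__(self) -> None:
        self._moves: Dict[str, Trajectory] = {}

    def record(self, command: str, frames: Trajectory) -> None:
        """Store a copy of the demonstrated frames under a symbolic command."""
        if not frames:
            raise ValueError("a move needs at least one frame")
        self._moves[command] = [list(frame) for frame in frames]

    def invoke(self, command: str) -> Trajectory:
        """Return a copy of the stored move; raises KeyError if it is unknown."""
        return [list(frame) for frame in self._moves[command]]


kit = KinematicKit()
kit.record("wave", [[0.0, 0.2], [0.1, 0.4], [0.0, 0.2]])  # shown once by the user
replayed = kit.invoke("wave")  # later invoked by name, without a new demonstration
```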

12. Teaching increasingly complex motions

Improvements to the base kinematic kit allow us to teach increasingly complex motions to Babu, including manipulating objects during his exercises or adjusting to challenging environments during the interaction. Eventually, we even managed to teach Babu to do a proper handstand.

13. Babu meets MaxWhere - virtual 3D Mollia showroom

We created a MaxWhere showroom to showcase Babu's abilities. MaxWhere is a universally available and easy-to-use 3D virtual platform with a captivating visual world, proven to support the reception and comprehension of virtually shared information and improve collaboration. Collaboration is king.

14. Prototype game - multiplayer simulation in the browser

Our proof-of-concept game demonstrates the capabilities of the technology. Humans and AI need to work together to achieve a common goal. There was a lot of background work to ensure the game was accessible from a web browser. We can simultaneously simulate up to four robots, enabling the user to have a friendly multiplayer tournament.

Let's Start The Conversation

As Alexander Graham Bell put it: "Great discoveries and improvements invariably involve the cooperation of many minds."

If you are interested in AI, robotics, gaming, e-sports, blockchain, NFTs, business, marketing, startups, or just want to have a chat, let's start the conversation.